How Pre-Program Diagnostics Improve Leadership Workshop Effectiveness

Most professional development workshops begin with introductions, overview presentations, and general discussions about participants' roles and challenges. These activities help build rapport, but they consume valuable program time that could be spent on skill development and practical application.

The deeper problem is that such workshops assume participants arrive with similar baseline knowledge and face comparable challenges. In reality, professional development participants typically bring diverse experience levels, different organisational contexts, and specific needs that generic content can't address effectively. Pre-program diagnostics offer a systematic way to close that gap – and the investment pays dividends in participant engagement, program relevance, and client satisfaction.

The Problem with Generic Professional Development

Without diagnostic information, facilitators face an impossible choice: pitch content at a level that works for the least experienced participants (disengaging those with more experience), or target more advanced content (leaving newer practitioners behind). Mixed-experience groups make this particularly challenging.

Workshop time constraints make it worse. A two-day program can't cover every relevant topic comprehensively. Without understanding participant priorities, the choices facilitators make about depth versus breadth may not align with what participants actually need. Individual participants often have specific challenges that generic content addresses only superficially – a manager struggling with team motivation needs different support than one navigating strategic communication or conflict resolution.

Pre- and post-training assessments remain among the most reliable ways to evaluate whether participants have gained necessary skills – and the data they generate provides a clear picture of knowledge gained during the program (ProProfs Training, 2025). Running a pre-program diagnostic extends this principle further back: rather than discovering what participants didn't know after delivery, you understand what they need before you design or finalise the program.

What Diagnostic Information to Gather

Different types of diagnostic information serve different customisation purposes. The most useful fall into four categories:

Skill-level assessment – helps facilitators adjust content complexity and identify appropriate starting points for the group. Knowing that most participants have foundational knowledge frees you to move quickly past basics. Knowing that experience levels are mixed helps you design tiered activities.

Situational context – reveals the organisational environments where participants apply what they learn. Whether participants lead remote teams, work in regulated industries, or manage rapid change shapes which examples and scenarios will resonate.

Individual challenge identification – uncovers the specific situations participants find most difficult. This information lets you prioritise content areas and design application exercises around genuine workplace challenges rather than hypothetical ones.

Goal-setting information – clarifies what participants hope to achieve. When you understand individual goals, you can align content and activities with them, and participants can see clearly why each element of the program is relevant to them.

Designing Effective Diagnostics

The most common mistake in diagnostic design is gathering more information than you'll actually use. Every question should connect to a specific way you'll adapt the program or facilitate differently. If you can't articulate how a question will change your approach, cut it.

A few practical design principles:

Keep it focused and efficient. A diagnostic that takes more than 15–20 minutes to complete is likely to see lower participation rates. Respect participants' time by asking only what you genuinely need.

Explain how responses will be used. Participants are more likely to give thoughtful, useful responses when they understand the direct connection between their answers and their workshop experience. A brief note explaining that their responses will shape case studies, group composition, or content priorities tends to improve both completion rates and response quality.

Time it appropriately. Two to three weeks before the program is typically optimal – early enough to use the data in preparation, but recent enough that responses reflect participants' current situations rather than contexts they've since moved on from.

Offer something in return. Completion incentives such as a personalised insight report, recommended reading list, or individual coaching conversation during the program can increase participation and show that the diagnostic is genuinely useful, not just a data collection exercise.

What to Do with the Data

Diagnostic information enables several levels of customisation that don't require building a completely new program for each cohort.

Content examples and case studies can be adapted to reflect participant industries, organisational contexts, and the specific challenges identified in responses. This is often the highest-impact change – the same conceptual content lands very differently when the examples come from participants' own sectors.

Activity selection and sequencing can prioritise exercises that address the most common participant challenges. If diagnostics reveal that most participants struggle with delegation, more program time goes there. If most already have strong delegation skills but struggle with upward influence, you can move quickly past delegation and spend more time on upward influence.

Group composition for exercises and discussions can leverage participant diversity deliberately – mixing experience levels, industries, or challenge areas in ways that create mutual learning opportunities rather than grouping people for convenience.

Individual coaching moments during the program can address specific situations participants identified, providing personalised value within a group setting without scheduling separate coaching sessions outside the program.

Connecting Diagnostics to Program Delivery

The diagnostic should feel like the beginning of the program, not a separate administrative step. Participants should see a clear connection between what they shared and what happens in the room.

Reference diagnostic responses during activities to demonstrate personalisation and help participants connect learning to their specific situations. Use diagnostic information to structure introductions so participants share development goals rather than just job titles – this maintains relationship-building benefits while providing more useful information to the group.

Follow up on specific diagnostic responses through individual check-ins during the program, allowing you to address situations participants mentioned and provide targeted guidance without taking group time.

Measuring Whether Diagnostics Are Working

The impact of pre-program diagnostics is measurable, even if it requires some deliberate tracking.

The most meaningful measure is application success – whether participants apply workshop learning more effectively when content has been customised. This is also the measure that matters most to corporate clients evaluating return on their training investment.

Frequently Asked Questions

How long should a pre-program diagnostic take to complete?

Aim for 10–20 minutes. Beyond that, completion rates drop and you risk creating a negative first impression about your program's administrative demands. If you have more questions than can fit in that window, prioritise the ones that will most influence your program design decisions.

What if completion rates are low?

Low completion rates usually indicate one of three things: the diagnostic arrived too close to the program start date, participants don't understand why it matters, or it's too long. Address the first two before shortening the diagnostic – a well-communicated, timely diagnostic typically sees better completion than a shorter one whose purpose participants don't understand.

Should diagnostics be anonymous?

Generally no – named responses allow you to tailor individual coaching moments and group compositions in ways that anonymous data can't support. The exception is when you're asking about sensitive organisational dynamics or interpersonal challenges where participants might self-censor if identified.

How do I use diagnostic data without making participants feel exposed?

Aggregate trends in front of the group ("a number of you mentioned challenges with X") and address individual situations in one-on-one moments. This allows you to demonstrate personalisation without making specific participants feel singled out for their responses.

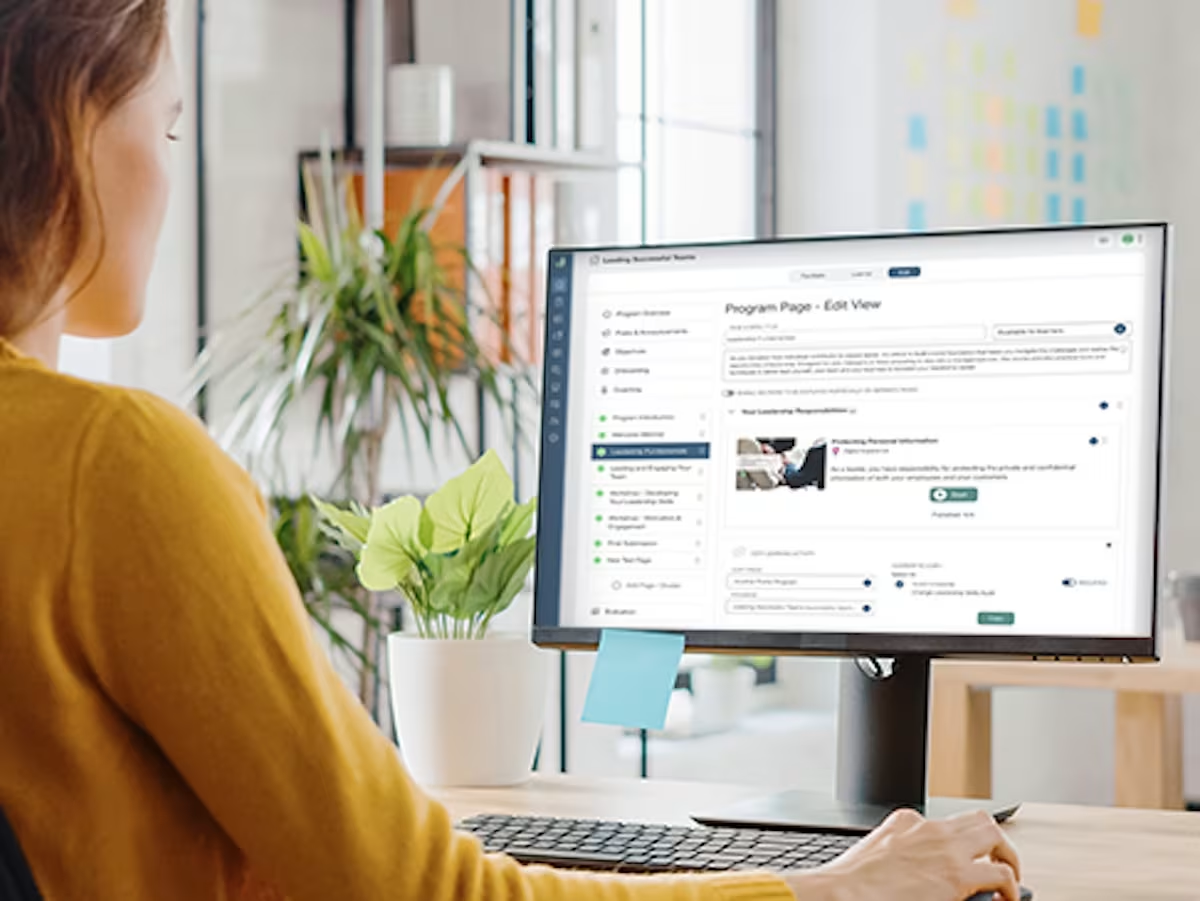

Does Guroo Academy support pre-program diagnostics?

Yes – Guroo Academy includes built-in capability diagnostics and pre-program assessment tools designed to support program customisation for professional development cohorts. Book a demo below to see how it works in practice.

Ready to see Guroo Academy in action?

Book a demo and see how Guroo Academy supports every part of your training business, from program delivery to B2B sales and finance management.