Test, Learn, Improve: A Practical Guide to A/B Testing Your Short Courses

Updated April 2026.

Most course improvements are based on intuition, participant feedback, or general best practice. These have value – but they leave a lot of improvement potential on the table. A/B testing gives you something more reliable: objective data about which specific elements produce better learning outcomes, higher engagement, and stronger enrolment.

This guide covers how to apply A/B testing to both your course design and your course marketing, and how to interpret results when you're working with smaller cohort sizes.

What A/B Testing Means in a Training Context

A/B testing involves comparing two versions of something to determine which performs better against a specific measure. Version A is shown to one group, Version B to another, and you measure the outcome you care about.

In marketing, A/B testing is well established. Companies that test regularly see conversion rates improve by more than one-third, yet only 17% of marketers actively A/B test (Genesys Growth, 2026). The gap between what systematic testing makes possible and what most organisations actually do is significant.

In course design, the same principle applies – but the metrics shift. Rather than optimising conversion rates alone, educational A/B testing considers:

- Learning outcomes

- Participant engagement

- Completion rates

- Application of skills back in the workplace

The goal is to improve learning for all participants, not just improve business metrics.

What You Can Test in Course Design

Course structure and sequencing

Test whether foundational concepts work better as an upfront block or distributed throughout the course. Some participants learn better with theoretical frameworks before practical application; others need the experience first, then the conceptual framing.

Delivery methods

Compare:

- Video presentations vs written materials

- Interactive exercises vs passive content

- Individual work vs collaborative activities

Delivery mode has a significant impact on both engagement and retention, and the best approach often varies by content type and audience.

Assessment approaches

Test different assessment formats, including:

- Practical application tasks vs theoretical knowledge checks

- Peer assessment vs facilitator evaluation

- Formative feedback throughout vs summative assessment at the end

Assessment design affects both learning outcomes and completion rates.

Introduction and onboarding

How you open a course shapes participants' expectations and motivation from the start. Consider testing:

Version A – learning objectives, course structure, and expectations presented upfront. A traditional approach that establishes a clear framework from the start.

Version B – a real-world scenario video showing why the course content matters, with objectives and structure introduced in context. This approach prioritises relevance and motivation.

Measure engagement in the first week, completion of early modules, and participant feedback about course relevance and clarity. Results will tell you whether your audience prefers structure or story as their entry point – and the answer often varies by professional background and experience level.

What You Can Test in Course Marketing

Marketing elements are generally easier to A/B test than course design, because sample sizes are larger and the outcomes are more immediate to measure. Good candidates include:

Email subject lines – test different angles such as benefit-led vs curiosity-led, specific vs general, or question-based vs statement-based. Subject lines have a measurable impact on open rates, which affects how many people see your course promotion in the first place.

Landing page copy – landing pages written at a 5th to 7th grade reading level convert at 11.1%, more than double the 5.3% rate for professional-level writing (Unbounce Conversion Benchmark Report, 2024). Simpler, clearer language tends to outperform dense copy, even for professional audiences.

Course descriptions – test different framings such as outcome-focused vs process-focused, benefit-led vs problem-led, or with and without specific social proof elements.

Registration processes – test the number of fields, the information you ask for, and whether a multi-step form performs better than a single-page form.

Preview materials – test which sample content, instructor introductions, or participant testimonials best communicate course value and set appropriate expectations.

Working with Small Sample Sizes

Professional training programs often work with smaller cohort sizes that make traditional statistical significance harder to achieve. This doesn't make testing pointless – it just requires a different approach:

- Focus on effect sizes, not just statistical significance. A large difference between two versions may be worth implementing even without statistical significance, particularly if it aligns with participant feedback and makes practical sense.

- Accumulate data across multiple deliveries. Consistent patterns across several cohorts provide confidence, even if individual cohorts don't reach significance.

- Use qualitative data alongside quantitative. With smaller groups, participant interviews and structured feedback can provide context that numbers alone can't capture. Numerical improvements that come with decreased satisfaction or increased frustration aren't genuine improvements.

- Test one variable at a time. With limited sample sizes, introducing multiple changes simultaneously makes it impossible to know what drove any difference you observe.
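
The first two points above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the completion counts are hypothetical, Cohen's h is one common effect-size measure for comparing two proportions (not the only option), and simple pooling assumes the cohorts are broadly comparable in audience and delivery.

```python
import math

def cohens_h(p_a: float, p_b: float) -> float:
    """Cohen's h: effect size for the difference between two proportions."""
    return 2 * math.asin(math.sqrt(p_b)) - 2 * math.asin(math.sqrt(p_a))

# Hypothetical completion data, one tuple per cohort:
# (completed_a, enrolled_a, completed_b, enrolled_b)
cohorts = [
    (9, 14, 12, 15),
    (10, 16, 13, 16),
    (8, 13, 11, 14),
]

def pooled_rates(cohorts):
    """Add up counts across cohorts and return the pooled rate for A and B."""
    done_a = sum(c[0] for c in cohorts)
    n_a = sum(c[1] for c in cohorts)
    done_b = sum(c[2] for c in cohorts)
    n_b = sum(c[3] for c in cohorts)
    return done_a / n_a, done_b / n_b

rate_a, rate_b = pooled_rates(cohorts)
h = cohens_h(rate_a, rate_b)

# Did Version B beat Version A in every individual cohort?
consistent = all(cb / nb > ca / na for ca, na, cb, nb in cohorts)

print(f"Pooled completion: A = {rate_a:.1%}, B = {rate_b:.1%}")
print(f"Cohen's h = {h:.2f}")
print(f"B ahead in every cohort: {consistent}")
```

By Cohen's rough convention, an h of about 0.2 is a small effect, 0.5 medium, and 0.8 large. A moderate effect that points the same way in every cohort is a stronger signal than one large result from a single delivery.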

Tools and Tracking

You don't need sophisticated software to run A/B tests for course design. Simple tools work well:

- A spreadsheet to track participant assignments, outcomes, and key metrics

- Your LMS analytics for learner progress, completion rates, and assessment scores by group

- Survey tools for qualitative feedback that complements quantitative data

- For marketing tests, most email platforms and landing page builders include built-in A/B testing functionality

The most important thing is consistency – tracking the same metrics across test groups and documenting both what you tested and what you found, including tests that didn't produce the results you expected.
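
As a minimal sketch of the spreadsheet approach, the snippet below randomly assigns participants to Version A or B and produces a CSV tracking sheet that any spreadsheet tool can open. The participant IDs, column names, and seed value are hypothetical placeholders; substitute whatever your LMS exports and whichever metrics you decide to track.

```python
import csv
import io
import random

def assign_groups(participants, seed=2026):
    """Shuffle participants, then alternate A/B so group sizes differ by at most one."""
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)
    return {name: "AB"[i % 2] for i, name in enumerate(shuffled)}

# Hypothetical participant IDs for one cohort
participants = [f"participant_{i:02d}" for i in range(1, 12)]
groups = assign_groups(participants)

# Build the tracking sheet in memory; write it to a file to open in a spreadsheet.
buffer = io.StringIO()
writer = csv.writer(buffer)
writer.writerow(["participant", "group", "completed_module_1", "assessment_score", "notes"])
for name in participants:
    writer.writerow([name, groups[name], "", "", ""])

print(buffer.getvalue())
```

Randomising the assignment, rather than letting participants or facilitators choose, helps keep the two groups comparable. Recording the seed alongside the sheet documents exactly how the groups were formed.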

How to Interpret Results

- Look for patterns, not single data points. With small cohorts, individual results can be misleading. Consistent trends across multiple deliveries are more meaningful than a single strong result.

- Consider practical implications. A version that produces slightly better learning outcomes but requires significantly more facilitation time may not be worth implementing at scale.

- Check whether different approaches work for different participants. Segmented analysis by experience level, industry, or learning preference sometimes reveals there's no single best approach – just different approaches for different groups.

- Document everything. Failed tests are as valuable as successful ones. If you know what doesn't work, you avoid repeating it and can build on the knowledge for future iterations.
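
The segmented analysis mentioned above can be illustrated with a small sketch. The records are invented for the example, and "new" versus "experienced" is a hypothetical segmentation; with cells this small, any real result would need confirming across further cohorts.

```python
from collections import defaultdict

# Hypothetical records: (version, experience_level, completed)
records = (
    [("A", "new", True)] * 3 + [("A", "new", False)] * 3
    + [("B", "new", True)] * 5 + [("B", "new", False)] * 1
    + [("A", "experienced", True)] * 5 + [("A", "experienced", False)] * 1
    + [("B", "experienced", True)] * 3 + [("B", "experienced", False)] * 3
)

def completion_by_segment(records):
    """Return the completion rate for each (segment, version) pair."""
    counts = defaultdict(lambda: [0, 0])  # (segment, version) -> [completed, total]
    for version, segment, completed in records:
        counts[(segment, version)][0] += int(completed)
        counts[(segment, version)][1] += 1
    return {key: done / total for key, (done, total) in counts.items()}

def overall_rate(records, version):
    """Completion rate for one version, ignoring segments."""
    outcomes = [completed for v, _, completed in records if v == version]
    return sum(outcomes) / len(outcomes)

rates = completion_by_segment(records)
for (segment, version), rate in sorted(rates.items()):
    print(f"{segment:12s} version {version}: {rate:.0%}")
print(f"Overall: A = {overall_rate(records, 'A'):.0%}, B = {overall_rate(records, 'B'):.0%}")
```

In this made-up data the two versions look identical overall, yet each one clearly suits a different group. That is the pattern a segment-level breakdown is meant to surface.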

Building a Testing Culture

Systematic testing works best as an ongoing practice rather than a one-time project. Building testing into your regular course development and delivery cycle – even small, simple tests – creates a habit of evidence-based improvement that compounds over time.

Start with a single element you're genuinely uncertain about, measure it carefully, and make a decision based on what you find. Over time, this approach transforms course development from educated guessing into systematic improvement.

Frequently Asked Questions

How do I decide what to test first?

Start with elements you're most uncertain about, or where participant feedback suggests inconsistency. Course introductions, assessment formats, and key marketing copy are all good starting points because they have measurable impact and are relatively easy to vary.

How long should I run a test before drawing conclusions?

For course design tests, you typically need to run the test across at least two to three cohorts before the results are meaningful. For marketing tests with larger traffic volumes, standard guidance is to run until you've reached a predetermined sample size – don't stop early just because one version looks promising.
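
One common way to set that predetermined sample size is the standard normal-approximation formula for comparing two proportions. The sketch below assumes a two-sided test at the conventional 5% significance level with 80% power; the baseline and target conversion rates are hypothetical, and the result should be treated as a planning estimate rather than an exact requirement.

```python
import math
from statistics import NormalDist

def sample_size_per_group(p_a, p_b, alpha=0.05, power=0.80):
    """Approximate visitors needed per version to detect a change from p_a to p_b."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p_a * (1 - p_a) + p_b * (1 - p_b)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p_a - p_b) ** 2)

# Hypothetical example: a landing page converting at 5%, hoping to reach 7%
n = sample_size_per_group(0.05, 0.07)
print(f"Visitors needed per version: {n}")
```

Smaller expected differences need far more traffic to detect, which is why it helps to decide the target before the test starts rather than checking results daily.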

Can I test with very small groups – for example, under 20 participants?

Yes, but interpret results cautiously. Focus on qualitative patterns and practical significance rather than statistical significance. A test with 15 participants per group is unlikely to produce statistically significant results unless the difference between versions is very large, but it can still reveal whether participants respond differently to two approaches – especially when combined with structured feedback.
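
To see what this looks like in numbers, here is a minimal sketch of Fisher's exact test, a test often used for small 2x2 comparisons, written with the Python standard library only. The completion counts are hypothetical: 9 of 15 participants completing under Version A against 12 of 15 under Version B.

```python
from math import comb

def fisher_exact_two_sided(a_success, a_total, b_success, b_total):
    """Two-sided Fisher's exact test p-value for a 2x2 table of successes and failures."""
    total_success = a_success + b_success
    denom = comb(a_total + b_total, total_success)

    def prob(k):
        # Probability that group A has exactly k successes, given the fixed totals
        return comb(a_total, k) * comb(b_total, total_success - k) / denom

    lowest = max(0, total_success - b_total)
    highest = min(a_total, total_success)
    observed = prob(a_success)
    # Sum every outcome that is at least as unlikely as the one observed
    # (the small tolerance guards against floating-point ties)
    return sum(
        prob(k) for k in range(lowest, highest + 1)
        if prob(k) <= observed * (1 + 1e-9)
    )

# Hypothetical result: 60% completion for A vs 80% for B, 15 participants each
p_value = fisher_exact_two_sided(9, 15, 12, 15)
print(f"p-value: {p_value:.3f}")
```

A 20-point gap in completion looks substantial, yet at this group size the p-value sits well above the usual 0.05 threshold. That is the reason to lean on effect sizes, repeated cohorts, and structured feedback rather than a single significance test.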

What if my test results are inconclusive?

Inconclusive results are still informative – they suggest that any effect of the element you tested is too small to detect at your sample size, which is often a reason to focus testing resources elsewhere. Document it and move on.

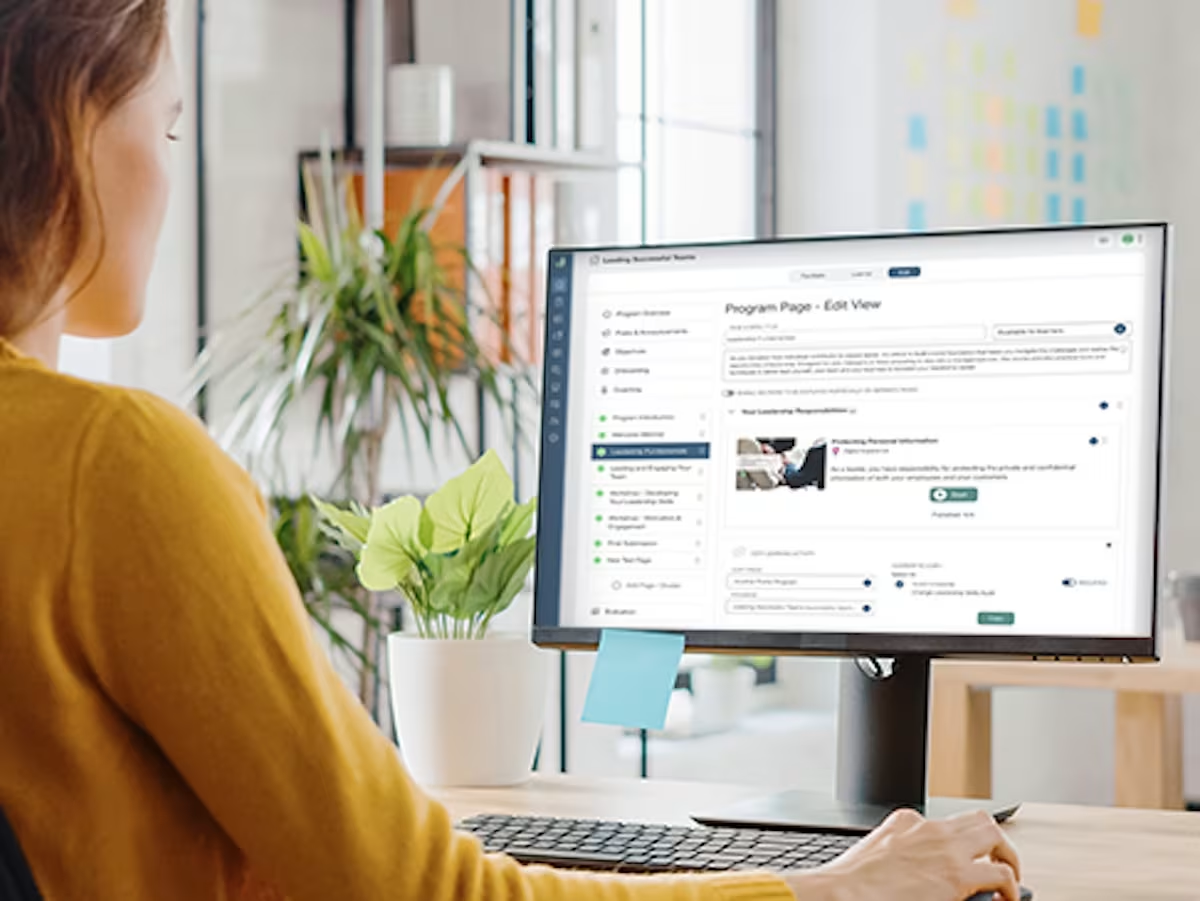

Does Guroo Academy support A/B testing and analytics?

Guroo Academy provides built-in learner progress metrics, completion tracking, and assessment analytics that can support A/B testing across course cohorts. Book a demo to see how it works in practice.
